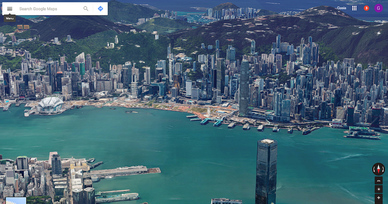

Google's detailed 3D flyover of Hong Kong in Google Earth highlights a developing trend. That trend will accelerate as more reality capture takes place and we fill up a digital world that mirrors the real one. See Google's "A bird’s eye view of Hong Kong." The 3D virtualization of the real world will make our collective understanding of the world both smaller and larger at the same time. That brings some genuinely exciting upsides, along with one extraordinary reason to pause and reflect.
